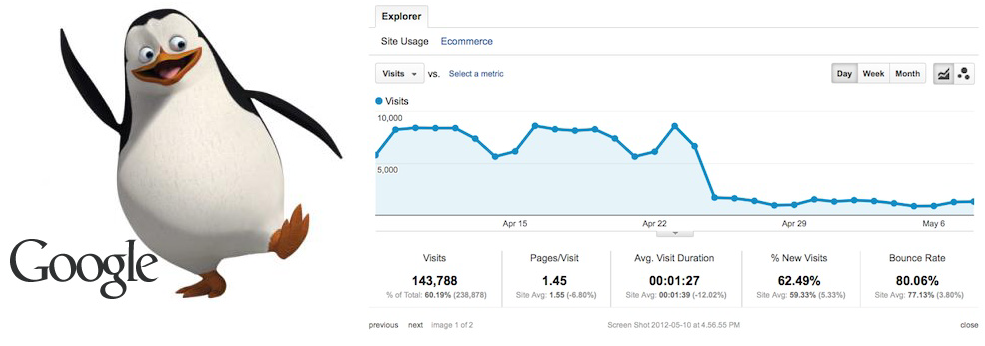

After Google’s Panda and Penguin updates, business owners and SEOs suddenly had to become more sophisticated and careful with the SEO tactics that had previously brought them plenty of traffic and links. For many sites, the result of these updates was a penalty and, consequently, heavy losses in traffic and revenue.

Below are the most common causes of penalties, which you can (hopefully) avoid.

Duplicate content

Duplicate content can be external or internal. External duplicates arise when similar content is distributed to, and submitted on, various low- and medium-quality sites. This tactic was extremely popular before the Panda update, after which Google devalued the websites engaged in such practices en masse. Consequently, links from those websites came to be treated as spammy and low-quality. If you still use this tactic for link building, submit articles only to high-quality resources, and rewrite a couple of paragraphs and other elements beforehand so that each copy looks different.

However, you may also be penalized for duplicate content not because you keep distributing it among low-quality sites, but because your competitors copy your content and post it on their pages. In that case, either their page or yours may end up at the bottom of the SERP. You can check whether your content is being scraped with Copyscape. If it is, report it here.

Remember that duplicate content can cause trouble not only when you distribute it, but also when it appears on your own website. Strictly speaking, this is not a penalty: if many of your pages are nearly identical, the search engine cannot determine which page is preferred and should appear in the SERP. Here you’ll find one of the best and most complete guides to fighting internal duplicates. If you use site-wide templates with lots of similar sidebar links and too little unique text, a search engine will likely treat such pages as duplicates. So make sure every page is distinct and brings value to the user.
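To make “nearly identical” a bit more concrete, here is a minimal sketch of one common way to measure how similar two pages are: split each page’s text into overlapping three-word “shingles” and compare the resulting sets with Jaccard similarity. This is not how Google detects duplicates (its method isn’t public); the function names, page URLs, texts, and the 0.7 threshold are all hypothetical and only illustrate the idea.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word sequences) in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.7,
                    k: int = 3) -> list[tuple[str, str, float]]:
    """Return pairs of page URLs whose shingle sets are at least `threshold` similar."""
    sigs = {url: shingles(text, k) for url, text in pages.items()}
    urls = sorted(sigs)
    pairs = []
    for i, u in enumerate(urls):
        for v in urls[i + 1:]:
            score = jaccard(sigs[u], sigs[v])
            if score >= threshold:
                pairs.append((u, v, round(score, 2)))
    return pairs


# Hypothetical template-driven pages that differ by a single word.
pages = {
    "/red-widgets": "Buy red widgets online. Free shipping on all widgets. Contact us for bulk orders.",
    "/blue-widgets": "Buy blue widgets online. Free shipping on all widgets. Contact us for bulk orders.",
    "/about": "We are a small family business founded to make durable tools for home workshops.",
}
print(near_duplicates(pages))
# prints: [('/blue-widgets', '/red-widgets', 0.71)]
```

Swapping a single word leaves 10 of the 14 distinct shingles shared, which is exactly the kind of template page the paragraph above warns about.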

Keyword stuffing

Straight from Google’s guidelines:

“Keyword stuffing” refers to the practice of loading a webpage with keywords or numbers in an attempt to manipulate a site’s ranking in Google search results. Often these keywords appear in a list or group, or out of context (not as natural prose).

For example: We sell custom cigar humidors. Our custom cigar humidors are handmade. If you’re thinking of buying a custom cigar humidor, please contact our custom cigar humidor specialists at custom.cigar.humidors@example.com.

Guess which keywords are being targeted.

The same problem can appear in code elements such as meta tags, titles, and h1 tags. Use synonyms and keyword variations instead of stuffing the page with one particular keyword. There’s no ideal keyword density approved by Google, so just make sure keywords are integrated naturally.
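Since there is no official density target, a quick count is mainly useful for making repetition visible. The sketch below counts how often a phrase occurs in Google’s humidor example and what share of all words those occurrences take up. The function name, the tokenization, and the crude plural handling (a trailing “s” on the last word) are my own assumptions, not anything Google publishes.

```python
import re


def phrase_stats(text: str, phrase: str) -> tuple[int, float]:
    """Count occurrences of `phrase` in `text` and the share of all words they use.

    Words of the phrase may be separated by any non-word characters (spaces,
    dots, commas), and a trailing plural "s" on the last word is accepted.
    """
    lowered = text.lower()
    words = re.findall(r"[a-z0-9']+", lowered)
    parts = [re.escape(w) for w in phrase.lower().split()]
    pattern = r"\b" + r"\W+".join(parts) + r"s?\b"
    hits = len(re.findall(pattern, lowered))
    share = hits * len(parts) / len(words) if words else 0.0
    return hits, share


example = (
    "We sell custom cigar humidors. Our custom cigar humidors are handmade. "
    "If you're thinking of buying a custom cigar humidor, please contact our "
    "custom cigar humidor specialists at custom.cigar.humidors@example.com."
)
hits, share = phrase_stats(example, "custom cigar humidor")
print(f"{hits} occurrences, {share:.0%} of all words")
# prints: 5 occurrences, 45% of all words
```

The count of five includes the copy hidden in the email address. Nearly half of the text is one phrase, which no reader would write naturally.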

Promotional anchor texts

Although Google’s guidelines don’t state it directly, anchor text distribution and the naturalness of the backlink profile have become extremely important since the Penguin update: websites with promotional backlink profiles were almost completely pushed out of the SERP.

If most of your anchor texts resemble “buy seo software” or “beautiful cheap tables”, i.e. they are overtly promotional, beware. After the Penguin update, many webmasters and SEOs cleaned up their backlink profiles by asking website owners to change the anchor text or remove the link entirely, and then submitting reconsideration requests to Google. Some websites even charged money for removing links. However, Google simply wanted to show that a backlink profile should look natural: it should include links from relevant resources of varying quality with diversified anchors, including nofollow links and anchors such as “click here” or “learn more”, the kind a typical user or webmaster would naturally insert.
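One way to see whether your profile is dominated by promotional anchors is to tally the anchor texts of your backlinks and look at each one’s share. The sketch below does that for a hypothetical list of anchors (in practice you would export them from whatever link-audit tool you use). The 30% cut-off is an arbitrary assumption for illustration; Google publishes no “safe” percentage.

```python
from collections import Counter

# Hypothetical cut-off: there is no official "safe" share, adjust to taste.
MAX_SHARE = 0.3


def anchor_distribution(anchors: list[str]) -> list[tuple[str, float]]:
    """Share of backlinks per distinct anchor text (case-insensitive), most common first."""
    counts = Counter(a.strip().lower() for a in anchors)
    total = sum(counts.values())
    return [(anchor, n / total) for anchor, n in counts.most_common()]


def overused_anchors(anchors: list[str], max_share: float = MAX_SHARE) -> list[str]:
    """Anchor texts whose share of all backlinks exceeds max_share."""
    return [anchor for anchor, share in anchor_distribution(anchors) if share > max_share]


# Hypothetical backlink anchors for a single site.
backlinks = ["buy seo software"] * 6 + ["Buy SEO Software"] * 2 + [
    "click here", "learn more", "example.com", "this review",
]
for anchor, share in anchor_distribution(backlinks):
    print(f"{share:6.1%}  {anchor}")
print("Overused:", overused_anchors(backlinks))
# prints: Overused: ['buy seo software']
```

Anchors are lower-cased before counting, so “Buy SEO Software” and “buy seo software” are treated as the same promotional anchor, which takes up two thirds of this profile.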

Low-quality backlinks

Although diversity matters, you should avoid low-quality websites, link farms, and other link schemes.

Try to avoid:

- Links inserted into articles with little coherence;

- Low-quality directory links or bookmarks;

- Links in footers of various sites;

- Spammy forum and blog comments;

- Links on so-called “partner pages” or “useful resources”.

Links in guest posts will probably be devalued in the near future, so if you follow poor guest blogging practices like submitting shallow content to low-quality blogs, it’s high time to review your link-building strategy.

Cloaking

Again, straight from Google’s website:

“The term “cloaking” is used to describe a website that returns altered web pages to search engines crawling the site. In other words, the web server is programmed to return different content to Google than it returns to regular users, usually in an attempt to distort search engine rankings. This can mislead users about what they’ll find when they click on a search result. To preserve the accuracy and quality of our search results, Google may permanently ban from our index any sites or site authors that engage in cloaking to distort their search rankings.”

If your pages show different content to search crawlers than to users, you are cloaking. Typically, the cloaked page served to search engine robots is stuffed with keywords and phrases, whereas the page shown to users looks normal and is only moderately optimized.
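If you inherited a site or use plugins you don’t fully trust, a rough self-check is to request the same page once with a Googlebot user-agent and once with an ordinary browser user-agent, then compare the two responses. This is only a sketch under clear assumptions: it catches cloaking keyed on the User-Agent header but not cloaking keyed on Googlebot’s IP addresses, dynamic elements (ads, timestamps, session tokens) will lower the similarity even on honest pages, and the 0.9 threshold and the function names are hypothetical.

```python
import difflib
import urllib.request

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"  # any ordinary browser string


def fetch(url: str, user_agent: str) -> str:
    """Download url while sending the given User-Agent header."""
    req = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


def similarity(a: str, b: str) -> float:
    """Ratio in [0, 1] of how similar two documents are, compared word by word."""
    return difflib.SequenceMatcher(None, a.split(), b.split(), autojunk=False).ratio()


def looks_cloaked(url: str, min_similarity: float = 0.9) -> bool:
    """Flag url if the versions served to Googlebot and to a browser differ noticeably."""
    as_bot = fetch(url, GOOGLEBOT_UA)
    as_user = fetch(url, BROWSER_UA)
    return similarity(as_bot, as_user) < min_similarity
```

Call `looks_cloaked("https://…")` with one of your own URLs. A `True` result is a prompt to diff the two responses by hand, not proof of cloaking.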

I’d like to finish with what is probably Google’s most quoted recommendation: “Make pages primarily for users, not for search engines.” The latest updates have made it clear that Google will sooner or later penalize deceptive website owners, even those who are only unintentionally or indirectly involved in manipulative tactics.